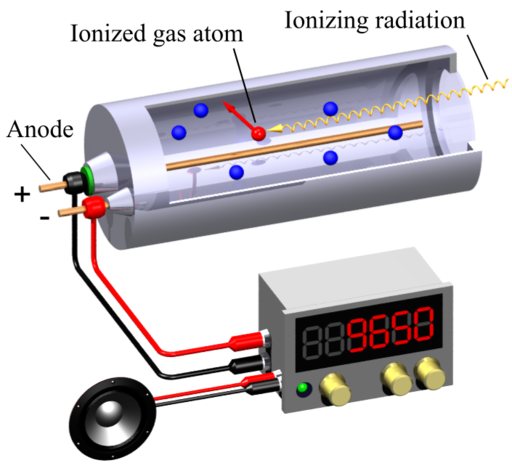

A Geiger-Müller (GM) counter is an instrument for detecting and measuring ionizing radiation. It uses a gas-filled Geiger-Müller tube: when radiation passes through the tube, it ionizes the gas and triggers a brief electrical pulse. The counter tallies these pulses and displays the result, often as counts per minute (CPM), so users can determine both the presence and the intensity of radiation.
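To make "counting pulses" concrete, here is a minimal Python sketch that groups pulse arrival times into counts per minute (CPM), the quantity the questions below refer to. The function name `counts_per_minute` and the pulse times are hypothetical, made up for illustration, and are not taken from the simulation.

```python
def counts_per_minute(pulse_times_s, total_minutes):
    """Group pulse timestamps (seconds since the start) into one-minute counts."""
    counts = [0] * total_minutes
    for t in pulse_times_s:
        minute = int(t // 60)  # which one-minute window this pulse falls in
        if 0 <= minute < total_minutes:
            counts[minute] += 1
    return counts


# Hypothetical pulse times in seconds, made up for illustration
pulses = [3.2, 17.9, 45.0, 61.5, 88.1, 119.9, 130.4]
cpm = counts_per_minute(pulses, 3)
print(cpm)  # [3, 3, 1]
```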

Svjo-2, CC BY-SA 3.0, via Wikimedia Commons

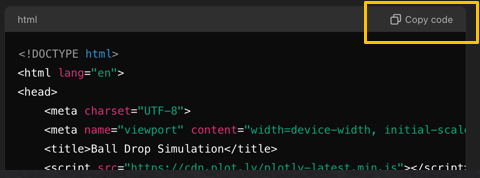

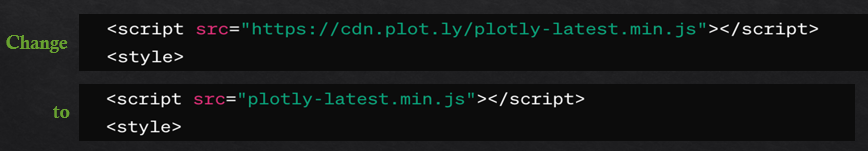

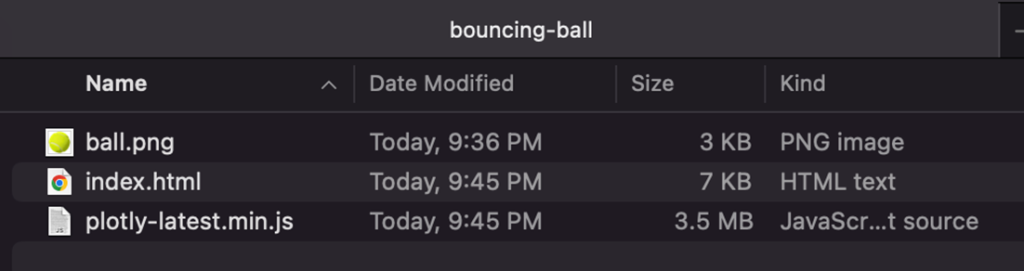

This simulation (available at https://physicstjc.github.io/sls/gm-counter) lets students explore the random nature of radiation and why background radiation must be accounted for in experiments. The questions below guide students through these ideas using the simulation.

Exploring Background Radiation

Q1: Set the source to “Background” and start the count. Observe the count for a few minutes. What do you notice about the counts recorded?

Q2: Why is it important to measure background radiation before testing other sources?
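Measuring the background for several minutes, rather than one, gives a more reliable average. The sketch below assumes the readings are independent one-minute counts and computes the mean background rate together with its uncertainty, using the Poisson rule that a total count N has an uncertainty of about sqrt(N). The function name and the readings are hypothetical example values, not output from the simulation.

```python
import math


def mean_rate_with_uncertainty(counts_per_minute):
    """Return the mean count rate (CPM) and its Poisson standard error."""
    minutes = len(counts_per_minute)
    total = sum(counts_per_minute)
    mean_cpm = total / minutes
    # For random (Poisson) counts, the uncertainty in a total N is about sqrt(N)
    std_error = math.sqrt(total) / minutes
    return mean_cpm, std_error


background = [18, 24, 21, 17, 25]  # hypothetical one-minute readings
rate, err = mean_rate_with_uncertainty(background)
print(f"Background: {rate:.1f} +/- {err:.1f} CPM")  # Background: 21.0 +/- 2.0 CPM
```

Because the uncertainty in the mean rate scales as sqrt(rate / t), counting four times longer roughly halves it.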

Investigating a Banana as a Radiation Source

Q3: Change the source to “Banana” and reset the data. Start the count and observe the readings. How do the counts from the banana compare to the background radiation?

Q4: How do the counts per minute (CPM) for the banana vary over time? Is there a pattern or do the counts appear random?
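One way to see why the CPM jumps around is to simulate a source whose decays happen at random times. This sketch assumes the clicks form a Poisson process with a hypothetical average of 30 CPM (not a value from the simulation) and draws the gaps between clicks from an exponential distribution using Python's `random.expovariate`. The helper name `simulate_minute_counts` is illustrative.

```python
import random
import statistics


def simulate_minute_counts(rate_cpm, minutes, rng):
    """Count random clicks in each one-minute window of a Poisson process.

    Gaps between clicks are exponential with mean 60 / rate_cpm seconds.
    """
    rate_per_s = rate_cpm / 60.0
    counts = []
    for _ in range(minutes):
        t = rng.expovariate(rate_per_s)  # time of the first click (s)
        n = 0
        while t < 60.0:
            n += 1
            t += rng.expovariate(rate_per_s)  # wait for the next click
        counts.append(n)
    return counts


rng = random.Random(1)  # fixed seed so the run is repeatable
counts = simulate_minute_counts(rate_cpm=30, minutes=2000, rng=rng)
mean = statistics.mean(counts)
sd = statistics.stdev(counts)
print("first ten minutes:", counts[:10])
print(f"mean = {mean:.1f} CPM, std dev = {sd:.1f}, sqrt(mean) = {mean ** 0.5:.1f}")
```

Individual minutes scatter noticeably, yet the standard deviation comes out close to sqrt(mean). That relationship is a signature of random, independent events rather than of any underlying pattern.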

Exploring a Cesium-137 Source

Q5: Set the source to “Cesium-137” and reset the data. Start the count and observe the readings. How do the counts from Cesium-137 compare to both the background radiation and the banana?

Q6: What do the counts per minute (CPM) tell you about the intensity of the Cesium-137 source compared to the other sources?

Understanding the Random Nature of Radiation

Q7: By looking at the sample counts, can you predict the next count value? Why or why not?
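A sketch of why the next count cannot be predicted: model the source as many nuclei that each decay independently with a small, fixed probability per interval, then measure how strongly each count is correlated with the one after it. As a simplifying assumption, the number of nuclei is held constant, standing in for a real sample with vast numbers of nuclei; the values 1000 nuclei and 2% per interval, and the helper names, are arbitrary and hypothetical.

```python
import random


def decays_per_interval(n_nuclei, p_decay, intervals, rng):
    """Count decays per interval when every nucleus decays independently.

    Simplification: the number of nuclei is held fixed (no depletion).
    """
    return [
        sum(1 for _ in range(n_nuclei) if rng.random() < p_decay)
        for _ in range(intervals)
    ]


def lag1_correlation(xs):
    """Pearson correlation between each value and the value after it."""
    a, b = xs[:-1], xs[1:]
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5


rng = random.Random(7)  # fixed seed so the run is repeatable
counts = decays_per_interval(n_nuclei=1000, p_decay=0.02, intervals=1000, rng=rng)
print("first counts:", counts[:12])
print(f"lag-1 correlation: {lag1_correlation(counts):.3f}")
```

The lag-1 correlation comes out close to zero, so earlier counts carry essentially no information about the next one; only the long-run average is predictable.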

Q8: How can you use the background radiation measurement to correct the readings from the banana and Cesium-137 sources?
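The correction in Q8 amounts to subtracting the background rate from the measured rate. The sketch below also propagates the Poisson uncertainties (each total count N has an uncertainty of about sqrt(N), and the two rate uncertainties are combined in quadrature), which helps judge whether a weak source stands out from background at all. The function name and all counts are hypothetical, made up for illustration rather than read from the simulation.

```python
import math


def corrected_rate(source_counts, source_minutes, bg_counts, bg_minutes):
    """Return the background-corrected count rate (CPM) and its uncertainty."""
    source_cpm = source_counts / source_minutes
    bg_cpm = bg_counts / bg_minutes
    net_cpm = source_cpm - bg_cpm
    # Poisson uncertainty of each rate is sqrt(N) / t; add the two in quadrature
    err_cpm = math.sqrt(source_counts / source_minutes**2 + bg_counts / bg_minutes**2)
    return net_cpm, err_cpm


# Hypothetical 5-minute totals, made up for illustration
bg_counts = 105      # background only (21 CPM)
banana_counts = 115  # banana plus background
cs_counts = 2400     # Cesium-137 plus background

net_banana, err_banana = corrected_rate(banana_counts, 5, bg_counts, 5)
net_cs, err_cs = corrected_rate(cs_counts, 5, bg_counts, 5)
print(f"Banana:     {net_banana:.1f} +/- {err_banana:.1f} CPM")  # 2.0 +/- 3.0
print(f"Cesium-137: {net_cs:.1f} +/- {err_cs:.1f} CPM")        # 459.0 +/- 10.0
```

With these made-up numbers, the banana's net rate is smaller than its uncertainty, so it cannot be distinguished from background, while the Cesium-137 excess is many times its uncertainty.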